Voice technology once felt invisible: a utility powering call centers, virtual assistants and transcription tools. Today, it sits at the center of global privacy debates. What changed is simple: voice is no longer viewed as just audio. Regulators increasingly treat it as biometric information capable of identifying individuals, inferring emotions and revealing behavioral patterns.

This shift has major implications for AI teams. Voice recordings can contain identity markers, health clues, demographic signals and contextual data, all wrapped into a single stream. As a result, organizations working with speech AI must now navigate complex AI data regulations that extend far beyond traditional data protection rules.

For many companies, the wake-up call comes during enterprise procurement or legal review. Questions that once focused on model accuracy now focus on risk:

- Is the dataset sourced with verifiable consent?

- Does it meet cross-border privacy requirements?

- Can we prove lawful processing under regional laws?

- What happens if regulators request deletion?

These concerns fall under the broader umbrella of voice data compliance, which has quickly become a prerequisite for deploying AI systems at scale.

European law illustrates the shift clearly. Under GDPR, biometric data used for identification receives heightened protection. That means many speech technologies, especially speaker verification and voice biometrics, fall into sensitive categories of GDPR voice data processing. Non-compliance can lead not only to fines but also to forced deletion of datasets, undermining years of model development.

But regulation is only part of the story. Public awareness is rising too. Users increasingly expect transparency about how their voices are collected, stored and reused. Trust is becoming a competitive differentiator, particularly in sectors like finance, healthcare and telecommunications.

This is where ethical voice data becomes strategically important. Ethical sourcing practices (clear consent, fair compensation, transparency of use and secure handling) reduce both legal exposure and reputational risk. They also future-proof AI systems as laws continue to evolve. Forward-looking organizations treat compliance not as a checkbox but as a design principle. By embedding ethical data governance into dataset creation, documentation and lifecycle management, teams can innovate with confidence rather than caution.

In short, voice has moved from background input to a high-risk biometric asset. For global AI builders, understanding this shift is no longer optional; it is foundational to building systems that are lawful, trusted and sustainable in a rapidly tightening regulatory environment.

Here’s the bottom line: in the era of biometric AI, innovation without governance is a liability.

Voice Data as Biometric Data

In modern AI systems, voice is no longer just sound; it can function as a fingerprint. When audio is processed to recognize or verify a person, regulators treat it as biometric data. Under GDPR voice data rules, this places voice in a highly sensitive category that requires strict safeguards, a valid legal basis (typically explicit consent) and clear purpose limitation.

For AI teams, this means speech datasets are not merely training inputs; they can become identity systems, often unintentionally.

What Makes Voice Biometric?

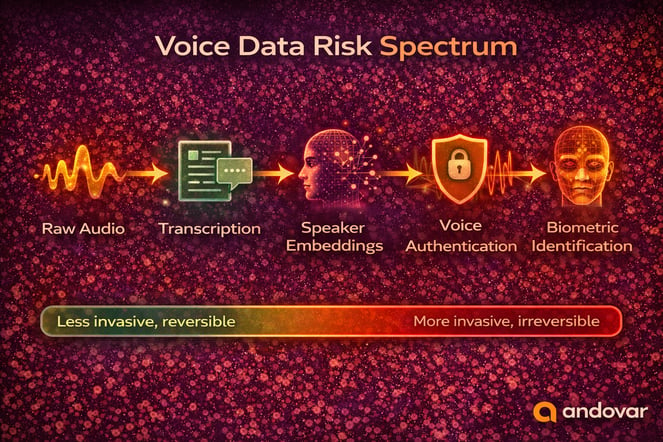

Voice becomes biometric when it can uniquely identify an individual. This typically happens through speaker recognition technologies that extract distinctive vocal features. Key elements that make voice biometric include:

- Voiceprints and speaker recognition — Mathematical models link recordings to a specific person

- Behavioral + physiological traits — Pitch, cadence, accent and vocal tract characteristics

- Permanent identifiers — Unlike passwords, voices cannot be easily changed after compromise

Anonymization is particularly difficult. Even without names or transcripts, a voice recording may still identify someone. Background noise, speech patterns or speaker embeddings can enable re-identification, especially in smaller language communities where fewer speakers share a given accent or dialect. From a compliance standpoint, this is why collecting audio without strong governance can quietly escalate risk.
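A minimal sketch of why stripping names is not enough. Speaker recognition systems typically reduce a recording to a fixed-length embedding (a "voiceprint") and compare embeddings by cosine similarity. The vectors below are tiny made-up examples and the 0.8 threshold is a hypothetical value; real embeddings come from a trained speaker model, have hundreds of dimensions, and use thresholds tuned per system.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def match_speaker(query: list[float], enrolled: dict[str, list[float]],
                  threshold: float = 0.8) -> Optional[str]:
    """Return the enrolled speaker most similar to the query, or None if no
    similarity reaches the (hypothetical) decision threshold."""
    best_name, best_score = None, threshold
    for name, embedding in enrolled.items():
        score = cosine_similarity(query, embedding)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Toy 4-dimensional "voiceprints"; real ones come from a trained model.
enrolled = {
    "speaker_a": [0.9, 0.1, 0.3, 0.2],
    "speaker_b": [0.1, 0.8, 0.2, 0.5],
}

# A clip with no name or transcript attached still carries the voice itself.
anonymous_clip = [0.85, 0.15, 0.28, 0.25]
print(match_speaker(anonymous_clip, enrolled))  # speaker_a
```

Here the unlabeled clip scores about 0.996 against `speaker_a` and about 0.42 against `speaker_b`, so it is re-identified. The same comparison that powers voice authentication is what makes "anonymous" audio identifiable.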

| Use Case | Identifiability | Regulatory Exposure | Example |
|---|---|---|---|
| Transcription | Low–Medium | Moderate | Speech-to-text |
| Analytics | Medium–High | High | Sentiment scoring |
| Speaker Recognition | High | Very High | Call center verification |
| Voice Authentication | Extreme | Critical | Banking login |

Why Regulators Treat Biometric Voice Data as High Risk

Authorities consider biometric voice data sensitive because misuse can cause lasting harm.

Major concerns include:

- Identity theft and impersonation using cloned or stolen voices

- Surveillance risks through large-scale speaker tracking

- Exposure of personal traits, including health or emotional state

Enforcement trends show regulators increasingly scrutinizing not just data collection but how audio is analyzed and reused. Organizations must demonstrate clear voice data compliance, secure handling and purpose limitation. In practice, strong ethical voice data practices and robust ethical data governance are essential for meeting modern AI data regulations and maintaining trust.

Across the use cases in the table above, each step from transcription toward voice authentication increases identifiability, regulatory exposure and potential privacy risk.

Key Regulations Affecting Voice Data

As voice increasingly qualifies as biometric information, regulatory exposure expands across jurisdictions. Organizations working with global datasets must navigate overlapping privacy laws and evolving AI data regulations, not just local compliance rules.

Below are the most influential frameworks shaping voice data compliance today.

GDPR (European Union)

The General Data Protection Regulation remains the global benchmark. Under GDPR voice data provisions, biometric data used for identification is classified as a special category of personal data. This means:

- Explicit consent is often required

- Purpose limitation must be clearly defined

- Data minimization principles apply

- Strong technical and organizational safeguards are mandatory

Cross-border transfers outside the EU require additional legal mechanisms, making multinational AI training pipelines more complex. Non-compliance can lead to substantial fines and forced deletion of datasets.

PDPA (Singapore and Other APAC Frameworks)

Several Asia-Pacific countries operate under Personal Data Protection Act (PDPA) models. While requirements vary, common themes include:

- Clear consent obligations

- Limits on secondary use

- Transfer restrictions for overseas processing

For multilingual AI systems sourcing data across Southeast Asia, documentation and consent clarity are critical components of ethical data governance.

CCPA / CPRA (California, United States)

California’s privacy framework treats biometric information, including voiceprints, as protected personal information. Consumers have rights to:

- Access collected data

- Request deletion

- Opt out of certain data uses

For companies serving U.S. markets, this reinforces the need for structured ethical voice data lifecycle management.

Emerging AI Regulations

Beyond privacy laws, governments are introducing AI-specific regulations. Risk-based frameworks increasingly classify biometric identification systems as high-risk applications.

This means compliance is no longer just about storage and consent; it may also require:

- Impact assessments

- Transparency reporting

- Human oversight mechanisms

For AI builders, the regulatory environment is converging toward stricter scrutiny of biometric systems. Voice data compliance can no longer be a reactive legal defense; done proactively, it becomes strategic infrastructure.

| Regulation | Region | Biometric Classification | Consent Level | Cross-Border Controls | Risk Level |
|---|---|---|---|---|---|
| GDPR | EU | Special Category | Explicit Required | Strict Safeguards | Very High |
| PDPA | Singapore/APAC | Contextual | Clear Consent | Transfer Restrictions | High |
| CCPA/CPRA | California | Voiceprints Protected | Notice + Opt-Out | Moderate | Medium–High |
| Emerging AI Laws | Global | Often High-Risk | Impact Assessment | Varies | Increasing |

Compliance Challenges

Even organizations that understand regulations on paper often struggle when applying them to real-world AI pipelines. Voice datasets rarely stay within one jurisdiction, one storage system or one legal framework. In practice, voice data compliance becomes an operational challenge, not just a legal one.

Cross-Border Transfers

Voice data projects are almost always global. A dataset might be collected in Southeast Asia, annotated in Europe and used to train models hosted in the United States. Each movement of data can trigger new obligations under regional AI data regulations.

Under European law, transferring GDPR voice data outside the EU requires either an adequacy decision covering the destination country or "appropriate safeguards" such as Standard Contractual Clauses. Similar restrictions exist across many regions, though with different legal tests.

From our experience at Andovar, cross-border compliance is especially complex for multilingual projects involving low-resource languages. Contributors may be located in countries with emerging or fragmented privacy regimes, making documentation essential.

Without a clear transfer strategy, organizations risk:

- Regulatory penalties

- Data localization conflicts

- Forced suspension of AI deployments

- Contractual disputes with enterprise clients

In short, moving data across borders is not just logistics; it is a compliance event.
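Treating each movement as a compliance event can be made concrete by logging every hop a dataset takes and blocking hops that lack a documented legal basis. This is a minimal sketch under stated assumptions: the jurisdiction codes, the `NO_MECHANISM_NEEDED` rule table and the mechanism labels are hypothetical placeholders that a legal team would maintain, not a summary of any country's actual transfer rules.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TransferHop:
    dataset_id: str
    origin: str               # jurisdiction the data leaves
    destination: str          # jurisdiction the data enters
    mechanism: Optional[str]  # documented legal basis; None if missing


# Hypothetical rule table maintained by counsel: destinations that an origin
# treats as not requiring an additional transfer mechanism.
NO_MECHANISM_NEEDED: dict[str, set[str]] = {
    "EU": {"EU"},
    "TH": {"TH"},
}


def undocumented_hops(hops: list[TransferHop]) -> list[TransferHop]:
    """Return hops that cross a border without a recorded transfer mechanism."""
    problems = []
    for hop in hops:
        exempt = hop.destination in NO_MECHANISM_NEEDED.get(hop.origin, set())
        if not exempt and not hop.mechanism:
            problems.append(hop)
    return problems


# The pipeline described above: collected in Southeast Asia, annotated in
# Europe, trained in the United States. Codes and labels are illustrative.
pipeline = [
    TransferHop("voice-set-01", "TH", "EU", mechanism="contractual clauses"),
    TransferHop("voice-set-01", "EU", "US", mechanism=None),
]
for hop in undocumented_hops(pipeline):
    print(f"BLOCK: {hop.dataset_id} {hop.origin} -> {hop.destination} "
          "has no documented transfer mechanism")
```

Unknown origins fall back to an empty exemption set, so any hop from a jurisdiction the rule table does not cover must carry a documented mechanism.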

Consent Documentation

Consent is often treated as a checkbox. Regulators treat it as evidence.

For biometric voice collection, organizations must demonstrate that contributors understood:

- What data was collected

- How it would be used

- Who would access it

- How long it would be retained

- Whether it would train AI systems

If this information changes later, the original consent may no longer be valid.

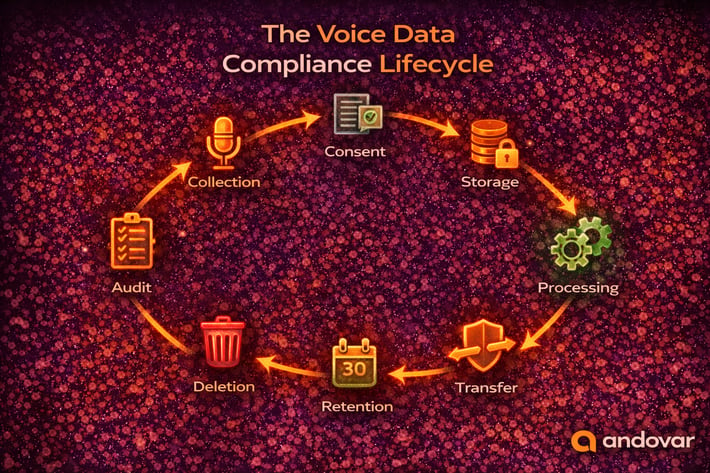

Robust ethical data governance requires consent records that are traceable, auditable and version-controlled. This is particularly important for long-term datasets reused across multiple AI projects.
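A minimal sketch of version-controlled consent, assuming the participant notice is stored as structured text. Hashing the exact wording gives each consent record a stable version ID; a changed notice produces a different ID, so consent given under older wording is flagged rather than silently reused. The field names, `ConsentRecord` and the helper functions are hypothetical; SHA-256 comes from Python's standard `hashlib`.

```python
import hashlib
from dataclasses import dataclass

# The five disclosures listed above; the key names are illustrative labels.
REQUIRED_DISCLOSURES = ("data_collected", "uses", "access", "retention", "ai_training")


def notice_version(notice: dict[str, str]) -> str:
    """Fingerprint the notice wording so a consent record points to exact text."""
    canonical = "\n".join(f"{key}={notice[key]}" for key in sorted(notice))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def missing_disclosures(notice: dict[str, str]) -> list[str]:
    """Disclosures the notice fails to state (an empty list means complete)."""
    return [key for key in REQUIRED_DISCLOSURES if not notice.get(key)]


@dataclass(frozen=True)
class ConsentRecord:
    contributor_id: str
    notice_id: str  # output of notice_version() for the wording shown
    signed_at: str  # ISO 8601 timestamp


def consent_matches_notice(record: ConsentRecord, current_notice: dict[str, str]) -> bool:
    """False when the notice has changed since the contributor agreed to it."""
    return record.notice_id == notice_version(current_notice)


notice_v1 = {
    "data_collected": "read-speech audio recordings",
    "uses": "speech recognition training",
    "access": "vendor annotation team",
    "retention": "deleted after 3 years",
    "ai_training": "yes",
}
assert missing_disclosures(notice_v1) == []
record = ConsentRecord("contrib-001", notice_version(notice_v1), "2024-05-01T10:00:00Z")

# The scope later expands to speaker verification: the wording, and so the version, changes.
notice_v2 = dict(notice_v1, uses="speech recognition and speaker verification")
print(consent_matches_notice(record, notice_v1))  # True
print(consent_matches_notice(record, notice_v2))  # False
```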

Data Retention Limits

Keeping data “just in case” is one of the biggest compliance risks.

Many regulations require organizations to retain personal data only as long as necessary for the stated purpose. For biometric information, regulators often expect stricter justification.

Retention policies should address:

- Storage duration

- Archival procedures

- Secure deletion methods

- Conditions for reuse

Failure to define lifecycle rules undermines claims of using ethical voice data and can expose organizations to enforcement actions.
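A minimal sketch of the first item, storage duration, assuming each file carries its collection date and a retention limit derived from its stated purpose. `RetentionPolicy`, `StoredAudio` and the 365-day limit are hypothetical; archival procedures, secure deletion and reuse conditions would sit on top of a check like this.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class RetentionPolicy:
    purpose: str
    max_days: int  # storage duration justified by the stated purpose


@dataclass(frozen=True)
class StoredAudio:
    file_id: str
    collected_on: date
    policy: RetentionPolicy


def deletion_due(item: StoredAudio) -> date:
    """Date from which keeping the file is no longer justified."""
    return item.collected_on + timedelta(days=item.policy.max_days)


def overdue_for_deletion(items: list[StoredAudio], today: date) -> list[str]:
    """IDs of files that have reached or passed their retention limit."""
    return [item.file_id for item in items if today >= deletion_due(item)]


asr_policy = RetentionPolicy(purpose="ASR model training", max_days=365)
inventory = [
    StoredAudio("clip-001", date(2023, 1, 10), asr_policy),
    StoredAudio("clip-002", date(2023, 12, 1), asr_policy),
]
print(overdue_for_deletion(inventory, today=date(2024, 3, 1)))  # ['clip-001']
```

Running a check like this on a schedule turns "retain only as long as necessary" from a policy statement into a queue of files awaiting secure deletion.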

Ethical Data as Risk Mitigation

Compliance is often framed as a defensive necessity, something organizations do to avoid penalties. But in the context of biometric speech systems, ethical practice is far more than legal protection. It is a long-term risk mitigation strategy. When companies adopt structured ethical voice data frameworks, they reduce regulatory exposure, operational disruption and reputational harm simultaneously. This is particularly important as AI data regulations evolve and enforcement becomes more proactive rather than reactive.

Traceability

One of the most overlooked safeguards in voice AI is traceability. Many organizations can confirm that they collected consent but struggle to prove which version of consent applied to a specific dataset or whether that dataset was later repurposed.

True traceability means being able to map every audio file to:

- Its collection context

- The consent version agreed to

- The jurisdictions involved

- The intended and approved use cases

Without that visibility, compliance becomes guesswork.

Under modern GDPR voice data expectations and similar frameworks globally, regulators increasingly expect documented processing histories. If a dataset has moved across borders, been re-annotated or been used to train a new model, that journey must be defensible. Traceability transforms compliance from assumption into evidence.
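One way to keep that journey defensible is to attach a provenance record to every audio file and append an entry each time the file is transferred, re-annotated or used for training. The sketch below is a minimal illustration under assumptions: the class names, action labels and jurisdiction codes are hypothetical, and a production system would persist these records in an append-only store rather than in memory.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ProcessingEvent:
    action: str        # e.g. "transfer", "re-annotation", "training"
    jurisdiction: str  # where the processing happened
    purpose: str       # use case the processing served


@dataclass
class ProvenanceRecord:
    file_id: str
    collection_context: str
    consent_version: str
    approved_jurisdictions: set[str]
    approved_uses: set[str]
    history: list[ProcessingEvent] = field(default_factory=list)

    def log(self, event: ProcessingEvent) -> None:
        self.history.append(event)

    def indefensible_events(self) -> list[ProcessingEvent]:
        """Events whose jurisdiction or purpose falls outside what consent covered."""
        return [
            e for e in self.history
            if e.jurisdiction not in self.approved_jurisdictions
            or e.purpose not in self.approved_uses
        ]


record = ProvenanceRecord(
    file_id="clip-0042",
    collection_context="scripted read speech, mobile app, Thailand",
    consent_version="consent-v3",
    approved_jurisdictions={"TH", "DE"},
    approved_uses={"asr_training"},
)
record.log(ProcessingEvent("transfer", "DE", "asr_training"))
record.log(ProcessingEvent("re-annotation", "DE", "emotion_analysis"))
record.log(ProcessingEvent("training", "US", "asr_training"))

for event in record.indefensible_events():
    print(f"{record.file_id}: {event.action} in {event.jurisdiction} for {event.purpose}")
```

The first event passes; the emotion-analysis re-annotation and the training run in an unapproved jurisdiction are both flagged, which is exactly the history a regulator would ask about.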

Audit Readiness

Audit readiness is not about waiting for a regulator to knock. It is about being structurally prepared.

Organizations practicing strong ethical data governance maintain centralized documentation, clear data flow diagrams, retention policies and access controls. They know where voice data resides, who can access it and when it must be deleted.

When enterprise clients conduct due diligence, which is increasingly common, this readiness accelerates procurement rather than delaying it. Instead of scrambling for documentation, teams can demonstrate structured voice data compliance processes from day one. In competitive AI markets, this operational maturity becomes a differentiator.

Enterprise Trust

Perhaps the most strategic benefit of ethical governance is trust. Enterprises deploying speech AI in finance, healthcare or telecommunications are risk-sensitive. They cannot afford regulatory exposure through poorly governed datasets. Demonstrating responsible sourcing, transparent consent mechanisms, secure storage and lifecycle management signals stability. It shows that innovation is built on responsible foundations.

In today’s regulatory climate, ethical voice data is not just about avoiding fines. It is about enabling sustainable AI deployment. Organizations that invest in governance early avoid costly redesigns later. Ethics and compliance, when embedded into system design, do not slow innovation — they stabilize it. And in a landscape defined by tightening AI data regulations, stability is a strategic asset.

| Dimension | Ethical Voice Data | Risky Practice |
|---|---|---|
| Consent | Explicit & documented | Vague / implied |
| Transparency | Clear purpose | Undefined usage |
| Retention | Defined lifecycle | Indefinite storage |
| Traceability | Source tracked | Unknown origin |
| Enterprise Acceptance | High | Low |

Best Practices for Voice Data Compliance

Meeting regulatory requirements isn’t a one-and-done task. It’s an ongoing discipline that should be woven into the entire lifecycle of voice AI, from the moment a contributor speaks into a microphone to the day the dataset is retired. In our experience supporting global AI teams, organizations that treat voice data compliance as core infrastructure move faster and face fewer surprises later.

When governance is bolted on at the end, projects stall. When it’s built in from the start, compliance becomes an enabler of scale rather than a roadblock.

Consent Versioning

Consent isn’t static. Project scopes evolve, datasets get reused and new jurisdictions come into play. Someone who agreed to provide recordings for transcription training may not have agreed to biometric authentication or emotion analysis later. To maintain ethical voice data standards, teams must track exactly what contributors agreed to and when. This is especially important when datasets live for years and pass through multiple use cases.

Minimal but essential practices include:

- Linking each dataset to the specific consent form version used at collection

- Recording permitted uses, geographic scope, and retention terms

- Flagging when new use cases require renewed consent

- Maintaining audit-ready records that can be produced on request

Proper versioning supports defensibility under GDPR voice data rules, where organizations must prove lawful processing rather than simply claim it.
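The practices above can be sketched as a small registry in which every dataset points to the consent form version used at collection, and a check runs before any new use. This is a minimal sketch, assuming hypothetical version IDs, use-case labels and region codes; it mirrors the example of recordings collected for transcription later being proposed for speaker verification.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentVersion:
    version_id: str
    permitted_uses: frozenset[str]
    geographic_scope: frozenset[str]
    retain_until: date  # retention term agreed in this version


# Hypothetical registry: each dataset is linked to the consent version used at collection.
CONSENT_VERSIONS = {
    "v1": ConsentVersion("v1", frozenset({"transcription"}),
                         frozenset({"EU"}), date(2026, 12, 31)),
    "v2": ConsentVersion("v2", frozenset({"transcription", "speaker_verification"}),
                         frozenset({"EU", "US"}), date(2027, 12, 31)),
}
DATASET_CONSENT = {"call-audio-2023": "v1", "call-audio-2024": "v2"}


def renewal_reasons(dataset_id: str, use_case: str, region: str, on: date) -> list[str]:
    """Reasons a proposed use needs renewed consent; an empty list means it is covered."""
    version = CONSENT_VERSIONS[DATASET_CONSENT[dataset_id]]
    reasons = []
    if use_case not in version.permitted_uses:
        reasons.append(f"use '{use_case}' not covered by consent {version.version_id}")
    if region not in version.geographic_scope:
        reasons.append(f"region '{region}' outside scope of consent {version.version_id}")
    if on > version.retain_until:
        reasons.append(f"retention under consent {version.version_id} ended {version.retain_until}")
    return reasons


print(renewal_reasons("call-audio-2023", "speaker_verification", "US", date(2025, 6, 1)))
print(renewal_reasons("call-audio-2024", "speaker_verification", "US", date(2025, 6, 1)))  # []
```

Returning reasons rather than a bare yes/no gives the audit record something concrete to cite when renewed consent is requested.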

More than 60% of users say they would stop using AI products that mishandle personal data.

Secure Storage

Because voice can uniquely identify individuals, it deserves protections similar to other biometric data. A compromised password can be changed; a compromised voiceprint cannot. Security, therefore, is not just IT hygiene — it is central to ethical data governance. Protection must extend across the entire storage ecosystem, especially in distributed AI pipelines.

Key safeguards typically include:

- Encryption for data at rest and in transit

- Strict role-based access controls

- Continuous monitoring and access logging

- Segregation of raw audio from derived biometric features

- Controlled download and duplication policies

Strong storage practices reduce both regulatory exposure and reputational risk, particularly as AI data regulations tighten globally.
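A minimal sketch of two of these safeguards, role-based access control and access logging, combined with segregation of raw audio from derived biometric features. The role names and the `ROLE_PERMISSIONS` policy are hypothetical; in practice this logic usually lives in the storage platform's own access-control layer, which would also handle encryption.

```python
import logging
from enum import Enum

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
access_log = logging.getLogger("voice_data_access")


class Store(Enum):
    RAW_AUDIO = "raw_audio"
    DERIVED_FEATURES = "derived_features"  # e.g. speaker embeddings, stored separately


# Hypothetical policy: no routine role can read both raw audio and voiceprints.
ROLE_PERMISSIONS: dict[str, set[Store]] = {
    "annotator": {Store.RAW_AUDIO},
    "ml_engineer": {Store.DERIVED_FEATURES},
    "data_protection_officer": {Store.RAW_AUDIO, Store.DERIVED_FEATURES},
}


def authorize_read(user: str, role: str, store: Store, file_id: str) -> bool:
    """Allow or deny a read, and log every decision for later audit."""
    allowed = store in ROLE_PERMISSIONS.get(role, set())
    access_log.info("user=%s role=%s store=%s file=%s allowed=%s",
                    user, role, store.value, file_id, allowed)
    return allowed


print(authorize_read("ana", "annotator", Store.RAW_AUDIO, "clip-0042"))         # True
print(authorize_read("ana", "annotator", Store.DERIVED_FEATURES, "clip-0042"))  # False
```

Roles missing from the policy map to an empty permission set, so access is denied by default, and denied attempts are logged alongside granted ones.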

| Level | Characteristics | Risk Exposure |
|---|---|---|
| Level 1 – Basic | One-time consent | High |
| Level 2 – Managed | Stored records | Medium |
| Level 3 – Versioned | Linked to dataset | Low |
| Level 4 – Auditable | Full traceability | Very Low |

Regulatory Alignment by Design

The most resilient organizations design projects to meet compliance obligations before data collection even begins. Instead of retrofitting controls later, they build processes that inherently satisfy legal expectations. This “alignment by design” approach is especially valuable for multinational speech programs spanning multiple legal regimes. A unified governance model can adapt to local requirements without fragmenting operations.

Practical elements often include:

- Data minimization built into collection protocols

- Clear purpose limitation defined at project kick-off

- Transparent participant information notices

- Predefined retention and deletion schedules

- Cross-border transfer mechanisms planned in advance

From our experience working with multilingual and low-resource language datasets, this upfront planning prevents costly rework and deployment delays.
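Alignment by design can be enforced as a simple gate: collection does not start until the project plan answers each item in the list above. The `ProjectPlan` fields, the `NEEDED_FIELDS` minimization rule and the purpose labels below are hypothetical; the point of the sketch is that gaps surface at kick-off rather than during an audit.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ProjectPlan:
    purpose: str = ""
    fields_collected: list[str] = field(default_factory=list)
    participant_notice_id: str = ""
    retention_days: Optional[int] = None
    planned_destinations: list[str] = field(default_factory=list)
    transfer_mechanisms: dict[str, str] = field(default_factory=dict)  # destination -> mechanism


# Hypothetical minimization rule: the fields each purpose actually needs.
NEEDED_FIELDS: dict[str, set[str]] = {
    "asr_training": {"audio", "transcript", "language"},
}


def kickoff_blockers(plan: ProjectPlan) -> list[str]:
    """Everything that must be resolved before the first recording is made."""
    blockers = []
    if not plan.purpose:
        blockers.append("purpose not defined")
    else:
        extra = set(plan.fields_collected) - NEEDED_FIELDS.get(plan.purpose, set())
        if extra:
            blockers.append(f"fields beyond stated purpose: {sorted(extra)}")
    if not plan.participant_notice_id:
        blockers.append("no participant information notice")
    if plan.retention_days is None:
        blockers.append("no retention and deletion schedule")
    for destination in plan.planned_destinations:
        if destination not in plan.transfer_mechanisms:
            blockers.append(f"no transfer mechanism planned for {destination}")
    return blockers


plan = ProjectPlan(
    purpose="asr_training",
    fields_collected=["audio", "transcript", "language", "date_of_birth"],
    retention_days=730,
    planned_destinations=["US"],
)
for blocker in kickoff_blockers(plan):
    print("BLOCKED:", blocker)
```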

Ethics and Compliance Are Inseparable

If there’s one lesson we’ve learned from supporting global speech AI initiatives, it’s this: you cannot separate ethics from compliance. Regulations define the minimum acceptable standard, but responsible organizations operate above that baseline. In the long run, projects grounded in ethical voice data practices prove more resilient, scalable, and trustworthy.

Voice data is uniquely sensitive because it sits at the intersection of identity, behavior, and biometric authentication. Once collected, it can power technologies ranging from accessibility tools to surveillance systems. That dual-use nature is precisely why regulators worldwide are tightening oversight through expanding AI data regulations.

Compliance alone may keep you legal today, but ethics keeps you viable tomorrow.

“Organizations using audited, representative datasets report up to 30% fewer model failures post-deployment.”

Organizations that treat governance as a strategic capability — not a legal burden — gain tangible advantages. They experience fewer deployment delays, smoother enterprise procurement processes, and stronger public trust. Clients increasingly ask not just “Is this legal?” but “Is this responsible?”

In practice, inseparability shows up in several ways:

- Ethical collection practices make regulatory compliance easier to demonstrate

- Transparent consent builds contributor trust and reduces reputational risk

- Secure handling protects both individuals and organizational credibility

- Lifecycle governance prevents future legal exposure from legacy datasets

- Responsible sourcing strengthens enterprise confidence in AI outputs

From our perspective, robust voice data compliance frameworks are not obstacles to innovation. They are the scaffolding that allows innovation to scale safely across borders and industries.

Equally important is the recognition that GDPR voice data requirements and similar laws worldwide are converging toward a risk-based model. Systems involving biometric identification, profiling, or automated decision-making face heightened scrutiny. Ethical safeguards implemented early can prevent costly redesigns later.

Strong ethical data governance also signals maturity. It tells regulators, partners, and customers that your organization understands the societal implications of the technologies it builds — and takes them seriously.

Ultimately, AI systems trained on voice will only be as trustworthy as the data behind them. Cutting corners in data sourcing may accelerate short-term progress, but it undermines long-term sustainability.

Ethics and compliance are not two parallel tracks. They are the same road.

FAQs: Global Regulations and Ethical Voice Data

Q1. Is voice data considered personal data under privacy laws?

Yes — in most jurisdictions, voice recordings qualify as personal data because they can identify an individual directly or indirectly. When used for speaker recognition or authentication, voice becomes biometric data, which is subject to stricter controls. This is why organizations handling ethical voice data must apply safeguards similar to those used for fingerprints or facial recognition.

Q2. What makes GDPR particularly strict about voice data?

The EU treats biometric identifiers as a “special category” of personal data when used for identification. That means processing GDPR voice data typically requires explicit consent, strong security measures and a clearly defined purpose. Non-compliance can lead to significant penalties and orders to stop processing entirely.

Q3. Do companies need consent to collect voice recordings for AI training?

In most cases, yes — especially when recordings come from identifiable individuals. Consent must be informed, freely given and specific about how the data will be used. If the intended use changes, organizations may need to obtain renewed permission to maintain voice data compliance.

Q4. How do cross-border data transfers affect voice AI projects?

Moving datasets across regions can trigger additional legal requirements. Some countries restrict transfers unless adequate protections are in place. For multinational teams, managing these obligations is one of the biggest challenges under modern AI data regulations.

Q5. Can voice data be truly anonymized?

True anonymization is extremely difficult because vocal characteristics can remain identifiable even after removing names or transcripts. Techniques like voice transformation or aggregation can reduce risk, but regulators often still treat such data cautiously. This is a key concern in ethical data governance discussions.

Q6. How long can organizations keep voice data?

Retention should be limited to what is necessary for the stated purpose. Many regulations require deletion once that purpose is fulfilled, unless a new lawful basis exists. Keeping data indefinitely “just in case” creates compliance risks and undermines claims of responsible handling.

Q7. What industries face the highest scrutiny for voice data use?

Financial services, healthcare, telecommunications, and public-sector applications tend to face heightened oversight because misuse could lead to fraud, discrimination, or surveillance concerns. Systems involving biometric authentication are particularly sensitive.

Q8. Why is ethical sourcing important beyond legal compliance?

Even if data collection is technically lawful, unethical sourcing practices can damage reputation and trust. Enterprises increasingly evaluate suppliers based on responsible data practices, making ethical voice data a competitive differentiator rather than merely a compliance requirement.

Final Thoughts

Voice is becoming one of the primary interfaces between humans and machines. From virtual assistants to call center automation and biometric authentication, speech-enabled systems are shaping how organizations interact with customers worldwide. But the power of voice technology comes with responsibility. Unlike many other data types, voice carries identity, emotion, and behavioral signals all at once. Mishandling it can expose individuals to risks that cannot easily be reversed.

From our experience at Andovar, the organizations best positioned for long-term success are those that treat governance as foundational. They invest early in secure collection pipelines, transparent contributor engagement and structured lifecycle management. They design projects to satisfy global expectations rather than retrofitting compliance later.

Responsible handling of ethical voice data does more than prevent regulatory issues — it builds systems people can trust. And trust is the currency that determines whether AI adoption accelerates or stalls. As global AI data regulations continue to evolve, the gap between compliant and non-compliant organizations will widen. Those with mature frameworks will scale confidently across markets. Those without will face repeated friction, delays, and scrutiny.

In other words, ethical data practices are not slowing innovation. They are what make sustainable innovation possible.

Key Takeaways

- Voice recordings often qualify as biometric data, requiring heightened safeguards

- Global privacy laws impose strict obligations on collection, use and transfer

- Strong consent management is essential for lawful processing

- Retention policies and secure storage reduce long-term risk

- Traceability and audit readiness enable enterprise trust

- Proactive governance supports scalable global deployment

- Ethical voice data practices strengthen both compliance and reputation

About the Author: Steven Bussey

A Fusion of Expertise and Passion: Born and raised in the UK, Steven has spent the past 24 years immersing himself in the vibrant culture of Bangkok. As a marketing specialist with a focus on language services, translation, localization, and multilingual AI data training, Steven brings a unique blend of skills and insights to the table. His expertise extends to marketing tech stacks, digital marketing strategy, and email marketing, positioning him as a versatile and forward-thinking professional in his field.